Artificial Intelligence (AI) and Machine Learning (ML) are set to play a key role in the transformation of the automotive industry, particularly in the design of autonomous cars. Alongside areas such as supply chain management, manufacturing operations, mobility services, and image and video analytics, audio analytics plays an important role in making self-driving cars a success. As technology advances, the automotive industry is reshaping itself and adopting new technologies. Audio analytics has sharpened car companies’ focus on improving their products and customer satisfaction. The global autonomous car market is projected to grow to 60 billion USD by 2030.

Audio analytics under Machine Learning in driverless cars consists of audio classification, NLP, and voice/speech and sound recognition. Voice and speech recognition has become an integral part of autonomous cars and the automotive industry. In earlier car models, voice and speech recognition was a challenge because of the lack of efficient algorithms, reliable connectivity, and processing power at the edge. In-cabin noise also degraded the performance of audio analytics, which resulted in false recognition.

Audio analytics in machines has long been a subject of research. With technological advancement, products now on the market such as Amazon’s Alexa and Apple’s Siri take advantage of cloud technology that earlier recognition systems lacked. However, for these systems to work smoothly in autonomous cars, uninterrupted wireless internet service is required.

Recently, Machine Learning algorithms such as kNN (k-Nearest Neighbours), SVM (Support Vector Machine), EBT (Ensemble Bagged Trees), and Deep Neural Networks (DNN), along with Natural Language Processing (NLP) techniques, have been widely used in audio analytics.

Various audio features are extracted from the raw audio and given as input to Machine Learning or Deep Learning algorithms.

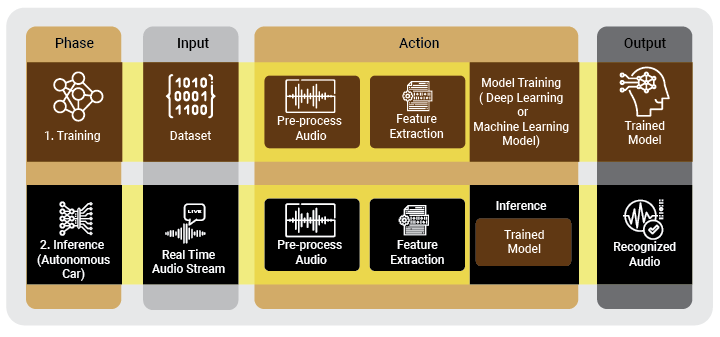

For audio analytics, as shown in the diagram below, audio data is first pre-processed to remove noise, and then audio features are extracted from it. Features such as MFCCs (Mel-frequency cepstral coefficients) are used here, together with statistical features like kurtosis and variance. The frequency bands used for MFCCs are equally spaced on the Mel scale, which approximates the response of the human auditory system; this is why MFCCs are chosen for training the model. Finally, the trained model is used for inference: a real-time audio stream is captured from the multiple microphones installed in the car, pre-processed, and passed through the same feature extraction. The extracted features are fed to the trained model to recognize the audio, which helps the autonomous car make the right decision.

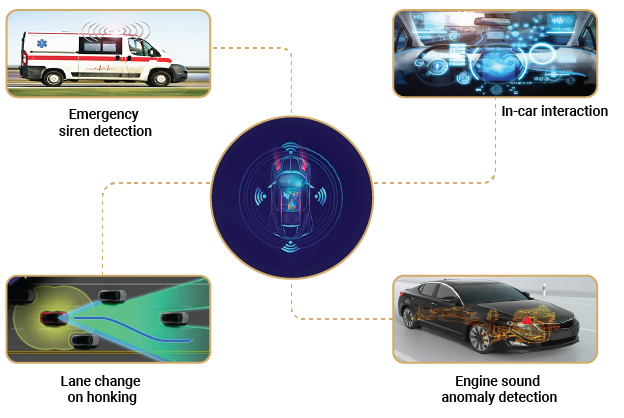
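To make the feature-extraction step more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the pipeline used in any particular product: it computes Mel-spaced band edges using the commonly quoted `2595 * log10(1 + f / 700)` form of the Mel scale, plus per-frame variance and kurtosis. It does not compute full MFCCs, and the function names, the frame length, and the non-overlapping framing are all hypothetical choices.

```python
import math

def hz_to_mel(freq_hz):
    """Convert Hz to Mel using the common 2595 * log10(1 + f / 700) form."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_band_edges(low_hz, high_hz, n_bands):
    """Return n_bands + 2 band edges (in Hz) equally spaced on the Mel scale."""
    low_mel, high_mel = hz_to_mel(low_hz), hz_to_mel(high_hz)
    step = (high_mel - low_mel) / (n_bands + 1)
    return [mel_to_hz(low_mel + i * step) for i in range(n_bands + 2)]

def variance(frame):
    """Population variance of one frame of samples."""
    mean = sum(frame) / len(frame)
    return sum((x - mean) ** 2 for x in frame) / len(frame)

def kurtosis(frame):
    """Population kurtosis (fourth standardized moment, not excess kurtosis)."""
    mean = sum(frame) / len(frame)
    var = variance(frame)
    if var == 0.0:
        return 0.0  # constant frame: define kurtosis as 0 to avoid dividing by zero
    fourth_moment = sum((x - mean) ** 4 for x in frame) / len(frame)
    return fourth_moment / (var ** 2)

def frame_features(samples, frame_len):
    """Split samples into non-overlapping frames; return (variance, kurtosis) per frame."""
    features = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        features.append((variance(frame), kurtosis(frame)))
    return features

# Made-up samples, purely for illustration.
toy_samples = [1, -1, 1, -1, 2, -2, 2, -2]
toy_features = frame_features(toy_samples, frame_len=4)
toy_edges = mel_band_edges(0.0, 8000.0, n_bands=10)
```

Because the edges are equally spaced in Mel rather than in Hz, the bands in `toy_edges` get wider as frequency increases, which mirrors the coarser frequency resolution of human hearing at high frequencies.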

Let us look at a few use cases developed for autonomous cars using audio analytics.

With new technologies, the end user’s trust is key, and NLP is a game-changer for building this trust in autonomous cars. NLP allows passengers to control the car using voice commands, such as asking it to stop at a restaurant, change the route, stop at the nearest mall, switch the lights on or off, open and close the doors, and many more. This makes the passenger experience rich and interactive.

- Emergency siren detection

The siren of an emergency vehicle such as an ambulance, fire truck, or police car can be detected using deep learning models as well as classical machine learning models like SVM (Support Vector Machine). SVM is a supervised learning model used for classification and regression analysis. The SVM classifier is trained on a large dataset of emergency siren sounds and non-emergency sounds. The resulting system identifies siren sounds so that the autonomous car can make appropriate decisions and avoid dangerous situations, for example by pulling over to give way to the emergency vehicle.
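As a rough illustration of the SVM idea, the sketch below trains a linear SVM with plain sub-gradient descent on the regularized hinge loss. It is a toy under stated assumptions, not a production detector: the two-dimensional feature vectors and their values are made up, the hyperparameters are arbitrary, and a real system would use features extracted from audio (such as MFCCs) and most likely an established library.

```python
def train_linear_svm(features, labels, epochs=500, lr=0.01, reg=0.01):
    """Train a linear SVM by sub-gradient descent on the regularized hinge loss.

    labels must be +1 (siren) or -1 (non-siren).
    """
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(features, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            if margin < 1.0:
                # Point is misclassified or inside the margin: hinge loss is active.
                w = [wi + lr * (y * xi - reg * wi) for wi, xi in zip(w, x)]
                b += lr * y
            else:
                # Point is safely classified: only the regularizer shrinks w.
                w = [wi - lr * reg * wi for wi in w]
    return w, b

def predict(w, b, x):
    """Return +1 (siren) or -1 (non-siren) for feature vector x."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score >= 0 else -1

# Hypothetical, made-up 2-D features per audio clip (not real measurements).
siren_clips = [(0.9, 0.8), (0.8, 0.9), (1.0, 0.7), (0.85, 0.95)]
other_clips = [(0.1, 0.2), (0.2, 0.1), (0.15, 0.3), (0.3, 0.15)]
train_x = siren_clips + other_clips
train_y = [1] * len(siren_clips) + [-1] * len(other_clips)

w, b = train_linear_svm(train_x, train_y)
```

Calling `predict(w, b, clip_features)` on a new clip then yields the label the car's decision logic would act on, for example to trigger a pull-over maneuver.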

- Engine sound anomaly detection

Automatic early detection of a possible engine failure could be an essential feature for an autonomous car. A car engine makes a certain sound when it works under normal conditions and a different sound when there is a fault. Among the many machine learning algorithms available, k-means clustering can be used to detect anomalies in engine sound. In k-means clustering, each data point of sound is assigned to one of k clusters, namely the cluster whose centroid (the mean of its members) is nearest to that point. An anomalous engine sound produces a data point that falls outside the normal cluster and becomes part of an anomalous cluster. With this model, the health of the engine can be monitored constantly; if an anomalous sound event occurs, the autonomous car can warn the user and help them make proper decisions to avoid a dangerous situation. This can prevent complete failure or breakdown of the engine.
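The clustering idea can be sketched in a few lines of Python. This is one possible variant, assumed for illustration: k-means is fitted on feature vectors from normal engine sound only, and a new point is flagged as anomalous when it lies farther from every centroid than a threshold derived from the training data. The feature values, the deterministic initialization from the first k points, and the `slack` factor are all hypothetical.

```python
import math

def nearest(point, centroids):
    """Return (index, distance) of the centroid closest to point."""
    dists = [math.dist(point, c) for c in centroids]
    idx = min(range(len(centroids)), key=dists.__getitem__)
    return idx, dists[idx]

def kmeans(points, k, iters=50):
    """Plain k-means; centroids start at the first k points (deterministic)."""
    centroids = [list(p) for p in points[:k]]
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            idx, _ = nearest(p, centroids)
            clusters[idx].append(p)
        new_centroids = []
        for i, members in enumerate(clusters):
            if members:
                # New centroid is the per-dimension mean of the cluster members.
                new_centroids.append([sum(col) / len(members) for col in zip(*members)])
            else:
                new_centroids.append(centroids[i])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # assignments no longer change
        centroids = new_centroids
    return centroids

def fit_detector(normal_points, k, slack=2.0):
    """Fit on normal sound only; threshold = slack * largest training distance."""
    centroids = kmeans(normal_points, k)
    max_dist = max(nearest(p, centroids)[1] for p in normal_points)
    return centroids, slack * max_dist

def is_anomalous(point, centroids, threshold):
    """A point far from every normal centroid is treated as an anomaly."""
    return nearest(point, centroids)[1] > threshold

# Made-up 2-D features for two normal regimes (e.g. idle and high revs).
normal = [(1.0, 1.0), (5.0, 5.0), (1.2, 0.9), (5.2, 4.9),
          (0.9, 1.1), (4.9, 5.1), (1.1, 1.0), (5.1, 5.0)]
centroids, threshold = fit_detector(normal, k=2)
```

Training on normal sound alone is a design choice that suits this use case, since recordings of faulty engines are usually much scarcer than recordings of healthy ones.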

- Lane change on honking

For an autonomous car to behave like a human-driven car, it must handle the scenario where it has to change lanes because a vehicle behind signals, by honking, that it needs to pass urgently. Random forest, a supervised machine learning algorithm, is well suited to this type of classification problem. As its name suggests, it builds a forest of decision trees and combines their individual predictions, typically by majority vote, to get a more accurate classification. A system built on this model can identify a particular horn pattern and make the decision accordingly.
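The sketch below shows the three ingredients of a random forest in miniature: bootstrap sampling, a randomly chosen feature per tree, and majority voting. It is deliberately simplified and should be read as an assumption-laden toy: every "tree" is a single-split stump rather than a full decision tree, the honk features are made up, and the function names are hypothetical.

```python
import random
from collections import Counter

def fit_stump(samples, labels, feature):
    """Find the best single-threshold split on one feature.

    Returns (feature, threshold, label_if_above, label_if_below).
    """
    values = sorted(set(s[feature] for s in samples))
    best = None
    for lo, hi in zip(values, values[1:]):
        thr = (lo + hi) / 2.0
        for above, below in ((1, 0), (0, 1)):
            correct = sum(
                (above if s[feature] > thr else below) == y
                for s, y in zip(samples, labels)
            )
            if best is None or correct > best[0]:
                best = (correct, thr, above, below)
    if best is None:
        # Only one distinct value: fall back to the majority label everywhere.
        majority = Counter(labels).most_common(1)[0][0]
        return feature, values[0], majority, majority
    _, thr, above, below = best
    return feature, thr, above, below

def train_forest(samples, labels, n_trees=15, seed=0):
    """Bagged stumps; assumes labels contain both classes 0 and 1."""
    rng = random.Random(seed)
    n, n_features = len(samples), len(samples[0])
    forest = []
    while len(forest) < n_trees:
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap sample with replacement
        boot_y = [labels[i] for i in idx]
        if len(set(boot_y)) < 2:
            continue  # redraw if the sample happens to contain a single class
        boot_x = [samples[i] for i in idx]
        feature = rng.randrange(n_features)  # random feature for this tree
        forest.append(fit_stump(boot_x, boot_y, feature))
    return forest

def predict(forest, x):
    """Majority vote over all stumps: 1 = urgent honk, 0 = no honk."""
    votes = Counter(above if x[f] > thr else below for f, thr, above, below in forest)
    return votes.most_common(1)[0][0]

# Made-up 2-D features per audio event (not real measurements).
honk = [(0.8, 0.9), (0.7, 0.8), (0.9, 0.7), (0.85, 0.75)]
no_honk = [(0.1, 0.2), (0.2, 0.1), (0.3, 0.25), (0.15, 0.3)]
train_x = honk + no_honk
train_y = [1] * len(honk) + [0] * len(no_honk)

forest = train_forest(train_x, train_y)
```

An odd number of trees is used so that a two-class vote can never end in a tie.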

- In-car interaction for autonomous cars

NLP (Natural Language Processing) processes human language to extract meaning that can help in making decisions. Rather than just giving commands, the occupant can actually speak to the self-driving car. Suppose you have given your autonomous car a name like Adriana; you can then say, “Adriana, take me to my favorite coffee shop”. This is still a simple sentence to understand, but the autonomous car can also be made to understand more complex sentences such as “take me to my favorite coffee shop and before reaching there, stop at Jim’s home and pick him up”. There are many more ways to interact with your car. However, self-driving cars should not obey the owner’s instructions blindly, so as to avoid dangerous, life-and-death situations. To achieve this, autonomous cars need more powerful NLP that actually interprets what the human has said and can echo back the consequences.
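To illustrate what "interpreting and echoing back" could look like at its very simplest, here is a toy Python sketch. It is a brittle pattern matcher, not real NLP: the regular expression is a hypothetical pattern that only covers the two sentence shapes quoted above, and the function names and the confirmation wording are assumptions made for this example.

```python
import re

# Hypothetical pattern covering only the two example sentence shapes.
_COMMAND = re.compile(
    r"take me to (?P<dest>.+?)"
    r"(?: and before reaching there, stop at (?P<stop>.+?)(?: and (?P<task>.+?))?)?"
    r"[.!]?$",
    re.IGNORECASE,
)

def parse_trip(utterance, wake_word="Adriana"):
    """Return the ordered list of stops, or None if the sentence is not understood."""
    text = utterance.strip()
    prefix = wake_word.lower() + ","
    if text.lower().startswith(prefix):
        text = text[len(prefix):].strip()  # drop the optional wake word
    match = _COMMAND.match(text)
    if match is None:
        return None
    stops = []
    if match.group("stop"):
        stops.append(match.group("stop"))  # the intermediate stop comes first
    stops.append(match.group("dest"))
    return stops

def confirmation(stops):
    """Echo the interpreted plan back so the occupant can confirm or correct it."""
    return "Plan: " + ", then ".join(stops) + ". Shall I proceed?"

simple = parse_trip("Adriana, take me to my favorite coffee shop")
compound = parse_trip(
    "take me to my favorite coffee shop and before reaching there, "
    "stop at Jim's home and pick him up"
)
```

The point of the sketch is the ordering and the echo: the car works out that the intermediate stop precedes the destination, then reads the plan back before acting on it.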

Machine Learning-based audio analytics thus contributes to making autonomous cars safe and reliable, and to their increasing popularity. At VOLANSYS, we help automotive companies develop custom solutions based on ML technologies like audio analytics, NLP, voice recognition, and more, enhancing passenger experience, on-road safety, and timely engine maintenance of automobiles. Our ML experts are skilled at working with diverse forms of data, ranging from numbers, audio, text, and video to images, using efficient frameworks, data analysis, and visualization tools.

Read our success stories to know how VOLANSYS designs Machine Learning based solutions for Automotive and other industries.

About the Author: Priyanshu Makhiyaviya

Priyanshu Makhiyaviya is associated with VOLANSYS Technologies as a Sr. Firmware Engineer. He has vast experience working on technologies like Machine Learning and Deep Learning on various Edge platforms, and on Digital Audio Broadcasting standards for Automotive infotainment and other domains.