Why do you need Docker? Code written in Python on your local system, for instance, might not run on another system or environment, often because that system has a different version of a library you used. Docker solves this problem.

Docker is a set of platform-as-a-service products for deploying applications. It uses OS-level virtualization to isolate applications from their surroundings and delivers software in packages called containers. Containers are isolated from one another, and each one bundles its own dependencies.

To understand Docker in detail, it helps to first understand virtual machines.

What is a VM?

A Virtual Machine (VM) is a virtual server that emulates a physical server. It reproduces the environment and configuration you would have if you installed your applications directly on the system’s physical hardware. Depending on your use case, you can use a system virtual machine, which emulates a complete machine, or a process virtual machine, which runs a single program. A VM lets you run applications or programs in isolation inside that environment.

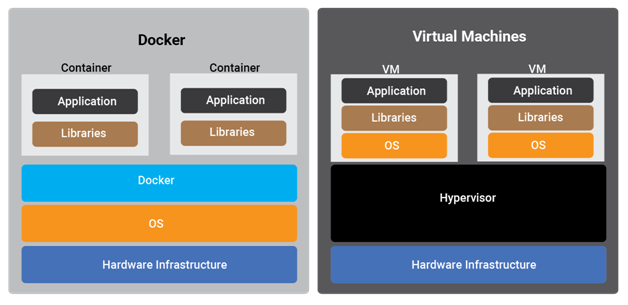

Difference Between Docker and Virtual Machines

Compared to VMs, Docker containers move the abstraction of resources up from the hardware level to the OS level. This brings benefits such as application portability, dependency management, independent microservices, and easier application monitoring.

In other words, while a VM abstracts an entire hardware server, a container abstracts the operating system kernel. This different approach to virtualization results in much faster and more lightweight instances.

What is a Container?

A Docker container is a standardized, executable package that can be created easily to deploy an application or to set up a new environment for it. It can be an OS container, such as Ubuntu or CentOS, or an application-oriented container, such as a CakePHP or Python-Flask container.

With Docker, users can run as many containers of a particular application as they need, or deploy more than one application in a single container. A user can create as many copies of a container as required for high availability or scaling up.

More containers than VMs can run on the same hardware, because containers are lightweight and share the host’s OS kernel.

What is a Docker Image?

A Docker image is a template for creating Docker containers. Images are the building blocks that hold the set of instructions for creating a container. You define an image by writing a Dockerfile, which lists the commands needed to assemble it, and you create the image by running the `docker build` command. You then create containers from the image with the `docker run` command.
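
As a minimal sketch of this workflow, the Python script below writes a small Dockerfile and assembles the `docker build` and `docker run` commands as argument lists. The base image `python:3.11-slim`, the image tag `demo-app`, and the file names `app.py` and `requirements.txt` are illustrative assumptions, not taken from this article. The commands are only printed, so the script runs even where Docker is not installed.

```python
import shlex
import tempfile
from pathlib import Path

# Illustrative Dockerfile; the base image and file names are assumptions.
DOCKERFILE = """\
FROM python:3.11-slim
WORKDIR /app
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY app.py .
CMD ["python", "app.py"]
"""


def write_dockerfile(build_dir: Path) -> Path:
    """Write the Dockerfile into build_dir and return its path."""
    path = build_dir / "Dockerfile"
    path.write_text(DOCKERFILE)
    return path


def build_command(tag: str, build_dir: Path) -> list[str]:
    """`docker build` turns the Dockerfile in build_dir into a tagged image."""
    return ["docker", "build", "-t", tag, str(build_dir)]


def run_command(tag: str) -> list[str]:
    """`docker run` creates and starts a container from an image."""
    return ["docker", "run", "--rm", tag]


with tempfile.TemporaryDirectory() as tmp:
    build_dir = Path(tmp)
    dockerfile = write_dockerfile(build_dir)
    # The first word of each line is the Dockerfile instruction.
    instructions = [line.split()[0] for line in dockerfile.read_text().splitlines()]
    print("Dockerfile instructions:", instructions)
    print(shlex.join(build_command("demo-app", build_dir)))
    print(shlex.join(run_command("demo-app")))
```

To actually build and run the image, you could pass the same argument lists to `subprocess.run` on a machine where Docker is installed and the referenced files exist.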

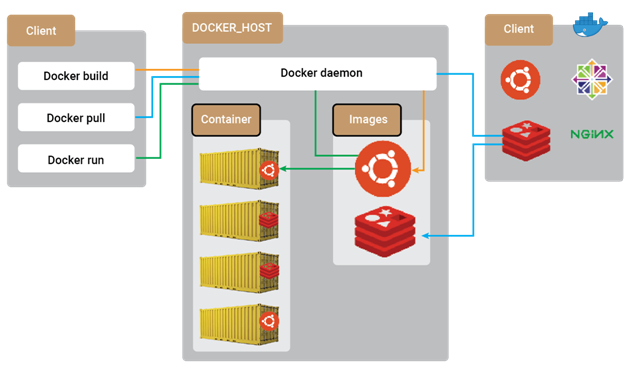

Docker Architecture

Understanding Docker’s architecture makes it easier to understand how containerized applications are structured. Docker uses a client-server architecture with two main components: the Docker daemon and the Docker client. The client communicates with the daemon through a REST API. You can run the daemon and the client on the same server or deploy them on different servers. The daemon manages all running containers and builds new ones.

- The Docker Daemon:- The Docker daemon manages all the components in the Docker architecture. It manages Docker images, containers, and the volumes attached to containers. It can also communicate with other Docker daemons.

- The Docker Client:- Users interact with Docker through the Docker client. When the user runs any docker command, the client sends that command to the Docker daemon. The client and daemon can run on the same system, or the daemon can run on a remote system.

- Docker Registries:- Docker images are stored in Docker registries, which are services for storing and fetching images, either publicly or privately. Docker Hub is the public Docker registry that everyone can access. Users can log in to Docker Hub, create their own public or private repositories, and pull images from Docker Hub to create containers.
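
The exchange between client and daemon can be sketched in a few lines of Python. This is a rough illustration rather than how the official `docker` CLI is implemented. It assumes the daemon listens on the Unix socket `/var/run/docker.sock` (a common default on Linux) and queries the Engine API’s `/version` endpoint. The names `UnixHTTPConnection` and `ask_daemon` are hypothetical helpers written for this example.

```python
import http.client
import json
import socket

# Assumption: the daemon listens on the usual Linux Unix socket.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """An HTTP connection that talks over a Unix socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def ask_daemon(path: str, socket_path: str = DOCKER_SOCKET):
    """Send a GET request to the daemon's REST API and decode the JSON reply."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read())
    finally:
        conn.close()


if __name__ == "__main__":
    try:
        status, info = ask_daemon("/version")
        print(status, info.get("Version"))
    except (OSError, http.client.HTTPException) as exc:
        # No daemon, no socket, or no permission to read the socket.
        print("Could not reach the Docker daemon:", exc)
```

The same request is what a command such as `docker version` triggers behind the scenes: the client formats an API call, and the daemon does the work and replies.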

Importance of Docker and its Applications

Docker’s goal is to make software development, application deployment, and business agility easy, fast, and reliable through containers. With Docker, we can bundle an application with all of its dependencies and deploy it on any host that runs Docker, regardless of the underlying operating system. Containerized applications are easy to move between environments because they carry their dependencies with them. Below are some applications of Docker.

- Deployment of production-level applications in software development

- Autoscaling on the same hardware based on the utilization of the application

- Easy code pipeline management

- Easy code testing in an environment that replicates production

- Easy retrieval of Docker images from Docker Hub

- Easy integration with various DevOps tools such as Bitbucket Pipelines, GitHub Actions, AWS CodeBuild, AWS CodeDeploy, Jenkins, etc.

What is Docker Orchestration?

Docker orchestration automates the deployment, management, scaling, and networking of containers. It can be used in any environment where you use Docker containers. It helps you deploy the same application or configuration across different environments without changing it, and it manages the life cycle of containers and their dynamic environments. These are tasks that are painful to manage manually. Below are the things that can be automated using Docker orchestration.

- Provisioning, deployment, and deletion of containers

- Movement of containers from one host to another if there is a memory or CPU utilization issue with the host

- Load balancing between containers

- High availability and scalability

- Health monitoring of containers, hosts, and applications using different metrics

- Efficient allocation of resources between containers

- Redundancy and availability of containers
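
To make a few of these tasks concrete, here is a toy scheduler in Python. It is only a sketch of the idea, not how Docker Swarm or Kubernetes work internally. The `Host` and `Container` classes, the memory figures, and the "most free memory first" placement rule are all assumptions made for illustration. The script places containers on hosts, then moves them elsewhere when a host is marked unhealthy.

```python
from dataclasses import dataclass, field


@dataclass
class Container:
    name: str
    memory_mb: int


@dataclass
class Host:
    name: str
    memory_mb: int
    healthy: bool = True
    containers: list = field(default_factory=list)

    def free_mb(self) -> int:
        """Memory not yet claimed by containers on this host."""
        return self.memory_mb - sum(c.memory_mb for c in self.containers)


def place(container, hosts):
    """Put the container on the healthy host with the most free memory."""
    candidates = [h for h in hosts if h.healthy and h.free_mb() >= container.memory_mb]
    if not candidates:
        raise RuntimeError(f"no capacity for {container.name}")
    target = max(candidates, key=Host.free_mb)
    target.containers.append(container)
    return target


def evacuate(failed, hosts):
    """Mark a host unhealthy and reschedule its containers on other hosts."""
    failed.healthy = False
    moved, failed.containers = failed.containers, []
    return {c.name: place(c, hosts).name for c in moved}


hosts = [Host("host-a", 1024), Host("host-b", 1024)]
for i in range(4):
    place(Container(f"web-{i}", 256), hosts)

layout = {h.name: [c.name for c in h.containers] for h in hosts}
print("initial layout:", layout)

moves = evacuate(hosts[0], hosts)
print("after host-a fails:", moves)
```

With these numbers the four containers are spread evenly across the two hosts, and when `host-a` fails both of its containers are moved to `host-b`. A real orchestrator weighs many more signals, such as CPU, health checks, and placement constraints.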

Docker Swarm is a Docker orchestration tool. It can package and run applications in Docker containers, find existing container images in public or private repositories, and deploy a container on any device in any environment that runs Docker.

Orchestration tools for Docker include the following:

- Docker Machine:- Installs Docker Engine on virtual machines

- Docker Swarm:- Clusters multiple Docker hosts so that their containers can be managed as if they ran on a single host

- Docker Compose:- Deploys multi-container applications and manages the connectivity of the containers with one another
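
One part of what Docker Compose handles is the relationship between services. The Python sketch below mimics that idea with a plain dictionary and works out a startup order from each service's dependencies, similar in spirit to the `depends_on` key in a Compose file. The service names (`db`, `api`, `web`), the image names, and the `startup_order` helper are hypothetical, and real Compose files are written in YAML rather than Python.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# A hypothetical three-service application, shaped like a Compose file.
services = {
    "web": {"image": "example/web", "depends_on": ["api"]},
    "api": {"image": "example/api", "depends_on": ["db"]},
    "db": {"image": "postgres", "depends_on": []},
}


def startup_order(services: dict) -> list:
    """Return service names so that each one starts after its dependencies."""
    # TopologicalSorter expects a mapping of node -> set of predecessors.
    graph = {name: set(spec.get("depends_on", [])) for name, spec in services.items()}
    return list(TopologicalSorter(graph).static_order())


order = startup_order(services)
print(" -> ".join(order))
```

Here the database starts first, then the API, then the web front end, because each service is started only after everything it depends on.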

Benefits of Containerized Orchestration Tools

- Increased portability:- With a few commands, users can replicate their entire application on other hardware. Applications are easy to scale and tear down, and dependencies are easy to manage.

- Simple and fast deployment:- New containers of an application can be created quickly to handle growing traffic.

- Enhanced productivity:- Deployment and process management are simplified, and dependencies are reduced.

- Improved security:- Applications are isolated from one another, which prevents interference between them.

How VOLANSYS Can Help

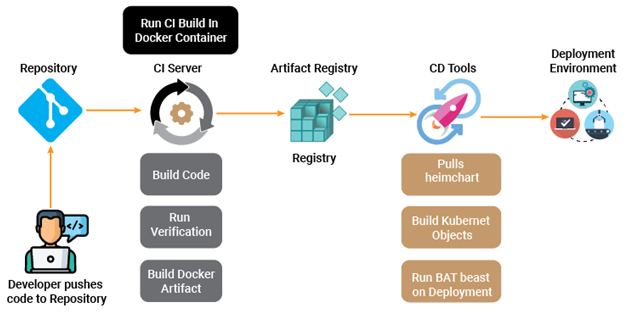

- Microservices are designed as containerized applications, i.e. applications that can run in a containerized environment.

- We define one Docker base image per platform, for instance Java. This makes it easy to maintain, scale, and update if any vulnerabilities are found.

- Once the developer commits the code, it triggers a CI run on Jenkins.

- In the build section of the above image, Jenkins is responsible for building the application’s executable and then the Docker image that uses it. Jenkins has no static agents; instead, we run the Jenkins workload in Docker containers on the Kubernetes cluster.

- Once the Docker image is pushed to the artifactory, it triggers automated deployment if the target environment is dev. For other environments, the deployment must be triggered manually. The Docker image runs in a Kubernetes pod.
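
The deployment rule in this workflow can be written down as a small Python sketch. The function name `next_step` and the environment names `staging` and `prod` are hypothetical. The workflow above only states that pushes targeting dev deploy automatically and that other environments need a separate trigger.

```python
# Per the workflow above, only the dev environment deploys automatically.
AUTO_DEPLOY_ENVIRONMENTS = {"dev"}


def next_step(target_env: str, image_pushed: bool) -> str:
    """Decide what happens after the CI build for a given target environment."""
    if not image_pushed:
        return "wait for image push"
    if target_env in AUTO_DEPLOY_ENVIRONMENTS:
        return "deploy automatically"
    return "wait for manual trigger"


for env in ("dev", "staging", "prod"):
    print(env, "->", next_step(env, image_pushed=True))
```

Keeping the set of auto-deploy environments in one place makes the policy easy to review and change.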

VOLANSYS, as a trusted DevOps services provider, has expertise in DevOps workflow automation, continuous integration and deployment pipelines, microservices architecture, and dockerization. Read our success stories to learn how VOLANSYS helps OEMs and enterprises embark upon their digital transformation journey by delivering DevOps services.

About the Author: Chintal Shah

Chintal Shah is associated with VOLANSYS Technologies as an Associate Project Manager. He has 10+ years of experience in managed services domains such as DevOps, CloudOps, and Network Operations. He has also worked on clients’ industry-specific business requirements, project management, and infrastructure solutions.